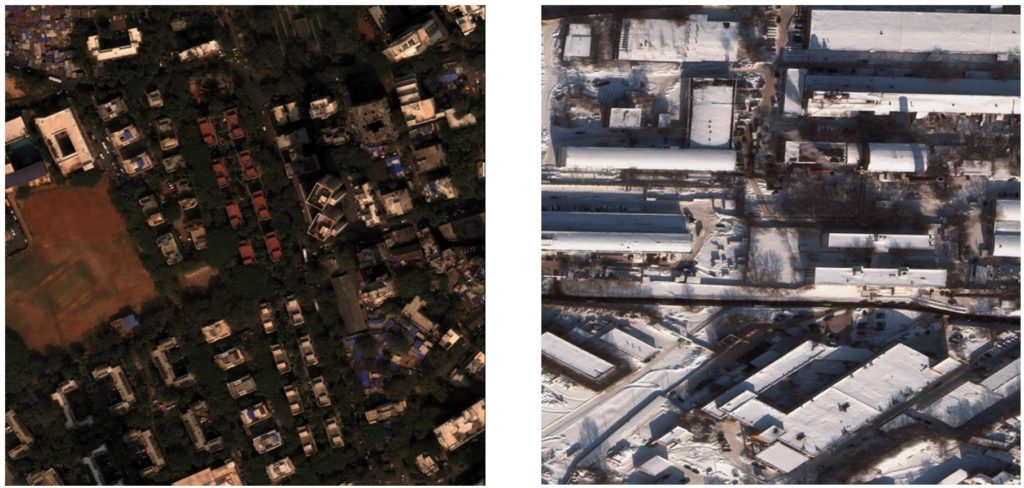

Occlusion can make it impossible to see some objects in off-nadir imagery.

A series of images taken by DigitalGlobe’s WorldView-2 satellite, from the SpaceNet Multi-View Overhead Imagery (MVOI) dataset described below, illustrating how look angle changes image appearance.

In this post, we’ll describe the challenges associated with automated mapping from overhead imagery. The key takeaway: though algorithms are fantastic at mapping from “ideal” imagery taken directly overhead, the types of imagery that exist in urgent collection environments - such as after natural disasters - pose a currently unsolved problem for state-of-the-art computer vision algorithms.

The current state of affairs

As computer vision methods improve and overhead imagery becomes more accessible, scientists are exploring ways to unite these domains for many applications: among them, monitoring deforestation and tracking population dynamics in refugee situations. Rapid disaster response could also be aided by automated overhead image analysis: new maps are often essential after disasters, as the infrastructure (e.g., roads) needed to coordinate response efforts may be disrupted. At present, this mapping is done manually by teams in government, the private sector, or volunteer organizations like the Humanitarian OpenStreetMap Team (HOT-OSM), who created a base map (roads and buildings) of Puerto Rico after Hurricane Maria at the request of the U.S. Federal Emergency Management Agency (FEMA). But manual labeling is time-consuming and labor-intensive: even with 5,300 mappers working on the project, the first base map of Puerto Rico was not delivered until over a month after the hurricane hit, and the project was not officially concluded for another month. This is by no means a criticism of the HOT-OSM team or their fantastic community of labelers - they had 950,000 buildings and 30,000 km of roads to label! Even a preliminary automated labeling step, corrected manually afterward, could improve map delivery time.

Computer vision-based map creation from overhead imagery has come a long way as deep learning models have grown from the nascency of AlexNet, implemented before TensorFlow even existed, to today’s advanced model architectures, including Squeeze-and-Excitation Networks and Dual Path Networks, and advanced model training methods implemented in easy-to-use packages like Keras. Alongside these developments, automated mapping challenges have seen steadily improving performance, as evidenced by the SpaceNet competition series: building extraction scores on imagery taken directly overhead improved almost three-fold from the first challenge in 2016 to the most recent challenge (discussed here) at the end of 2018.

Why don’t we automatically map after natural disasters?

A major barrier still exists to automated analysis of overhead imagery in disaster response scenarios: look angle.

Satellite imagery analytics have numerous human development and disaster response applications, particularly when time series methods are involved. For example, quantifying population statistics is fundamental to 67 of the 232 United Nations Sustainable Development Goal indicators, but the World Bank estimates that more than 100 countries currently lack effective Civil Registration systems. The SpaceNet 7 Multi-Temporal Urban Development Challenge aims to help address this deficit and develop novel computer vision methods for non-video time series data. In this challenge, participants will identify and track buildings in satellite imagery time series collected over rapidly urbanizing areas.

The competition centers around a new open source dataset of Planet satellite imagery mosaics, which includes 24 images (one per month) covering ~100 unique geographies. The dataset will comprise over 40,000 square kilometers of imagery and exhaustive polygon labels of building footprints in the imagery, totaling over 10 million individual annotations. Challenge participants will be asked to track building construction over time, thereby directly assessing urbanization.
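To make the building-tracking task a little more concrete, here is a minimal sketch of one simple way to carry building identities across monthly frames: greedy matching on intersection-over-union (IoU). This is an illustration only, not the challenge's scoring metric or any participant's method. It assumes footprints have already been extracted and, for brevity, simplifies them to axis-aligned boxes rather than the polygon labels the dataset provides. The names `iou` and `track` and the 0.5 threshold are hypothetical choices for this example.

```python
from typing import Dict, List, Tuple

# Assumption: each footprint is simplified to an axis-aligned box
# (xmin, ymin, xmax, ymax); real labels are polygons.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def track(frames: List[List[Box]], threshold: float = 0.5) -> List[Dict[int, int]]:
    """For each frame, map box index -> persistent building ID.

    A box inherits the ID of the best-overlapping, not-yet-claimed building
    seen in earlier frames; otherwise it is treated as new construction.
    """
    next_id = 0
    latest: Dict[int, Box] = {}  # building ID -> most recent footprint
    assignments: List[Dict[int, int]] = []
    for boxes in frames:
        frame_ids: Dict[int, int] = {}
        used = set()
        for i, box in enumerate(boxes):
            best_id, best_score = None, threshold
            for bid, prev in latest.items():
                if bid in used:
                    continue
                score = iou(box, prev)
                if score >= best_score:
                    best_id, best_score = bid, score
            if best_id is None:  # no sufficient overlap: a new building
                best_id = next_id
                next_id += 1
            used.add(best_id)
            frame_ids[i] = best_id
        # Update stored footprints only after the whole frame is matched.
        for i, bid in frame_ids.items():
            latest[bid] = boxes[i]
        assignments.append(frame_ids)
    return assignments


# Three monthly frames: one building, then a second, then a third appears.
frames = [
    [(0, 0, 10, 10)],
    [(0, 0, 10, 10), (20, 20, 30, 30)],
    [(1, 0, 11, 10), (20, 20, 30, 30), (40, 40, 50, 50)],
]
ids = track(frames)
print(ids)  # [{0: 0}, {0: 0, 1: 1}, {0: 0, 1: 1, 2: 2}]
```

The first building keeps ID 0 in the third frame despite a one-unit shift, because its IoU with the earlier footprint (90/110) stays above the threshold. Counting the IDs that first appear in each frame gives a rough proxy for new construction per month.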
